Artists’ books are works of art that use the form of the book. They are often published in small editions, though they are sometimes produced as one-of-a-kind objects. Whilst artists have been involved in the production of books in Europe since the early medieval period (such as the Book of Kells and the Très Riches Heures du Duc de Berry),… Continue reading Artist’s books

Category: Art

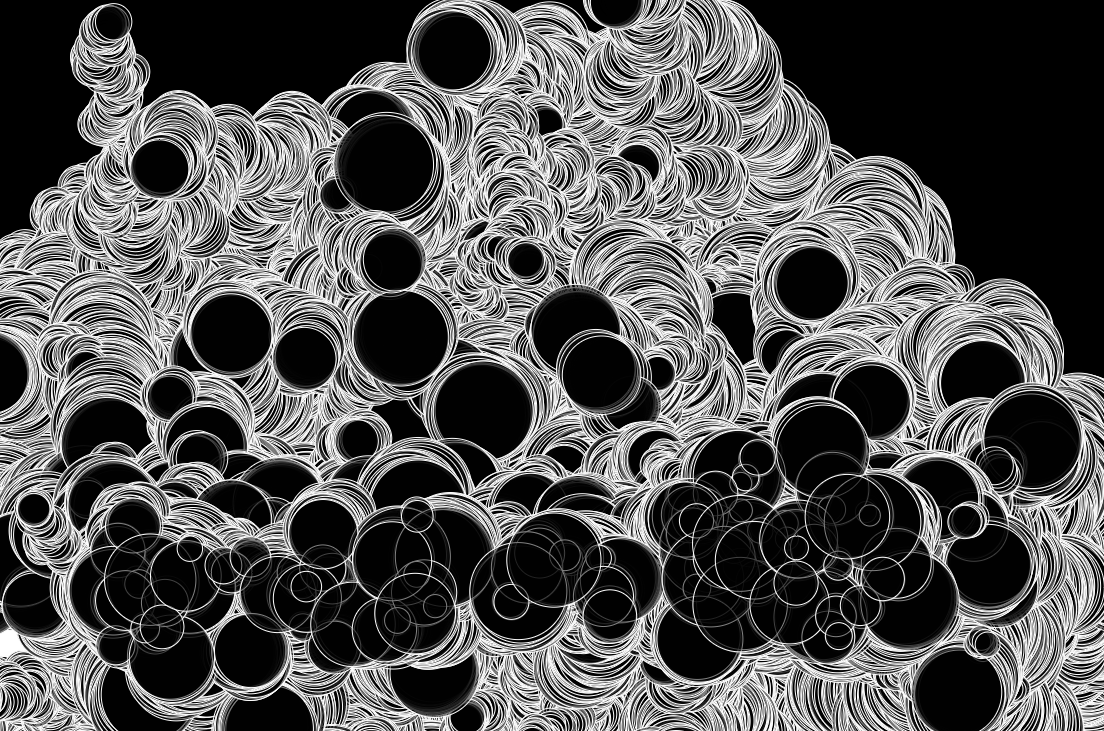

Blowing bubbles

Discovered the wonderful Daniel Shiffman recently and fell in love with his beginner tutorials on p5.js. What a superb educator! Click and drag to draw more bubbles. The maximum number of bubbles is set to 100, so by drawing new ones you can, in a way, control which part of the canvas they move around in. Click… Continue reading Blowing bubbles
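The 100-bubble cap can be pictured as a fixed-size pool where each newly drawn bubble pushes out an older one. Below is a minimal plain-JavaScript sketch of that idea, not the post's actual p5.js code: the `BubblePool` class, the `addBubble` method and the oldest-first removal policy are assumptions made for illustration.

```javascript
// Hypothetical sketch: a bubble collection capped at a maximum size.
// Assumption: when the cap is exceeded, the oldest bubble is removed first.
class BubblePool {
  constructor(maxBubbles = 100) {
    this.maxBubbles = maxBubbles;
    this.bubbles = [];
  }

  // Add a bubble at (x, y) and drop the oldest one if we go over the cap.
  addBubble(x, y) {
    this.bubbles.push({ x, y });
    if (this.bubbles.length > this.maxBubbles) {
      this.bubbles.shift(); // remove the oldest bubble
    }
  }
}

// Usage: simulate a drag that adds 150 bubbles along a line.
const pool = new BubblePool(100);
for (let i = 0; i < 150; i++) {
  pool.addBubble(i, 0);
}
console.log(pool.bubbles.length); // 100
console.log(pool.bubbles[0].x); // 50
```

Because only the most recent 100 bubbles survive, the latest strokes decide where the bubbles are. `Array.prototype.shift` is linear in the array length, which is fine at this size.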

Ideas for Drawing machine #1

My my, how time flies. It’s been two years since I built my first drawing machine, a vertical pen plotter. Since then I’ve built two more machines using the same hardware but in different sizes, and made over a hundred drawings and experiments. I even had a one-month exhibition at a tiny local cafe. Nerd… Continue reading Ideas for Drawing machine #1

Project: Sculpture made from 2 million oil barrels

(Featured photo by Sergio Russo) Keywords: Art, oil, environmentalism, activism, civil disobedience. Norway produces approximately 2 million barrels of oil per day, roughly 2% of the world’s production. This project seeks financing and formal approval for building and promoting a sculpture made from the same number of used oil barrels.

Glitching

Some years ago I worked on a series of images based on glitchy frames from the now-defunct RealVideo format. Back when high bandwidth was still rare, RealVideo was great for compressing streaming and on-demand video, but it would sometimes spit out video with strange artifacts, especially when livestreaming. In the… Continue reading Glitching

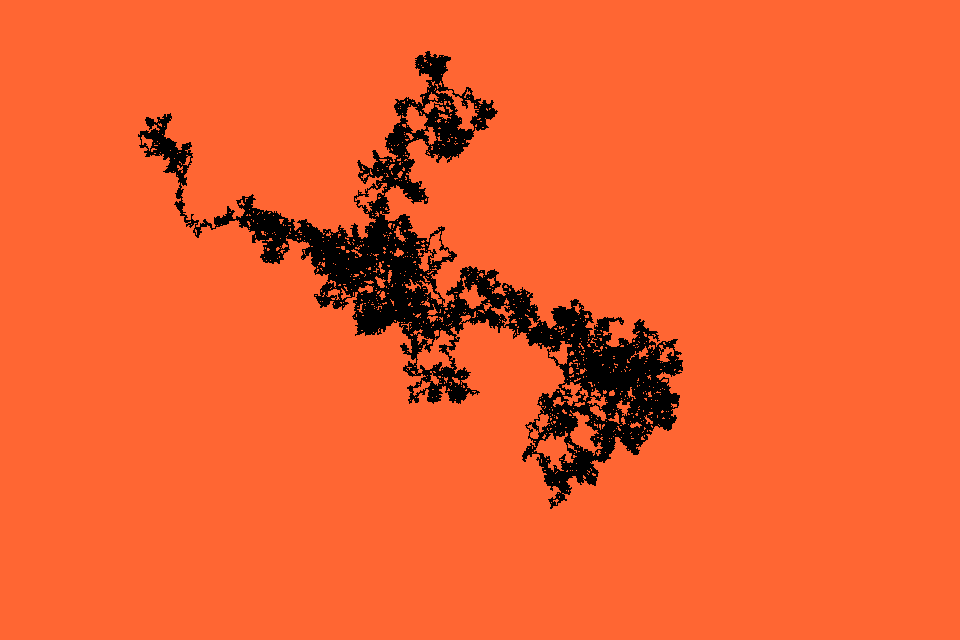

Drawing with JS part 1

A few experiments using JavaScript to draw or generate graphics. Rune.js (via http://printingcode.runemadsen.com/examples/), pens by Morten Skogly (@mskogly) on CodePen: Rune.js 3, which manipulates the outline of a polygon by changing the position of its vectors; Rune.js 8 Loop; Rune.js 4 Noise… Continue reading Drawing with JS part 1
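The idea behind the Rune.js 3 pen, reshaping a polygon by moving its vertices, can be sketched without any library. This is a hedged plain-JavaScript illustration, not the pen's code: the `regularPolygon` and `jitterVertices` helpers are hypothetical, and random per-vertex offsets are an assumed way of "changing the position of its vectors".

```javascript
// Hypothetical sketch: build a regular polygon, then move each vertex
// by a random offset to change its outline. Does not use Rune.js.

// Vertices of a regular polygon with n sides centred on (cx, cy).
function regularPolygon(n, cx, cy, radius) {
  const points = [];
  for (let i = 0; i < n; i++) {
    const angle = (2 * Math.PI * i) / n;
    points.push({
      x: cx + radius * Math.cos(angle),
      y: cy + radius * Math.sin(angle),
    });
  }
  return points;
}

// Return a copy of the polygon with each coordinate offset by
// a random amount in [-amount, amount).
function jitterVertices(points, amount, rand = Math.random) {
  return points.map((p) => ({
    x: p.x + (rand() * 2 - 1) * amount,
    y: p.y + (rand() * 2 - 1) * amount,
  }));
}

const square = regularPolygon(4, 200, 200, 100);
const wobbly = jitterVertices(square, 20);
console.log(wobbly);
```

The returned points could then be drawn with whichever library you prefer (in p5.js, for instance, with `beginShape()`, `vertex()` and `endShape(CLOSE)`). Passing a seeded `rand` function instead of `Math.random` would make the wobble repeatable between runs.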

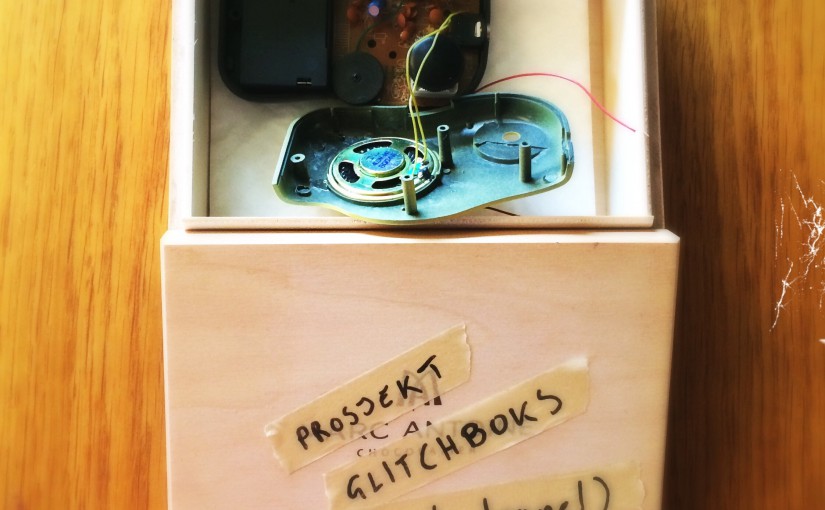

Project Glitchbox

I’m (almost) looking forward to winter; I have a couple of nice little projects lined up that I can cozy up with when the snow comes.

Cucumber, rose, cactus, snail shell, starfish

Macro lens experiment.

All-day drawings

All-day meetings offer a rare calm for drawing.

Mini DIY: Passive-Aggressive Home Decoration

Just until we get around to redecorating properly :)